Expert-as-a-Service: Towards Efficient, Scalable, and Robust Large-scale MoE Serving

Sep 22, 2025

Ziming Liu

Boyu Tian

Guoteng Wang

Zhen Jiang

Peng Sun

Zhenhua Han

Tian Tang

Xiaohe Hu

Yanmin Jia

Yan Zhang

He Liu

Mingjun Zhang

Yiqi Zhang

Qiaoling Chen

Shenggan Cheng

Mingyu Gao

Yang You

Siyuan Feng

EaaS Design

EaaS DesignAbstract

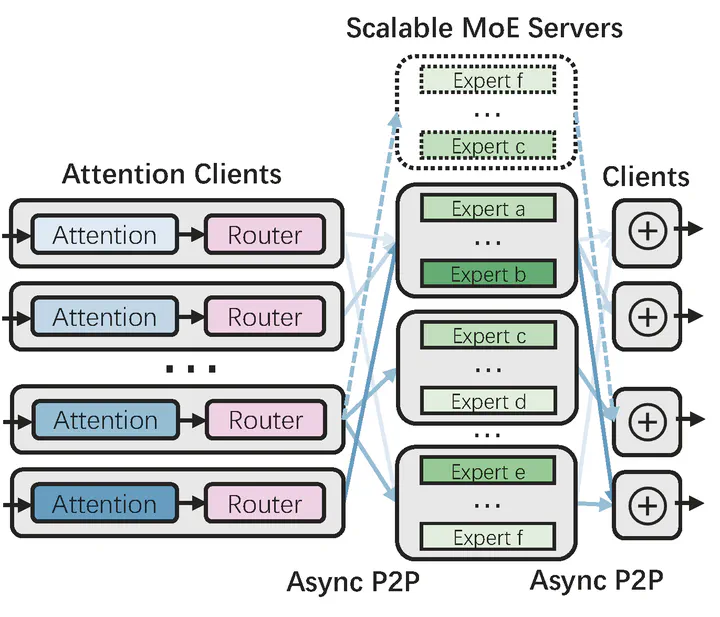

Mixture-of-Experts (MoE) models challenge serving infrastructures with dynamic, sparse expert utilization, causing instability on conventional systems designed for dense architectures. We propose EaaS, a novel serving system that enables efficient, scalable, and robust MoE deployment. Our system disaggregates MoE modules into independent, stateless services. This design enables fine-grained resource scaling and provides inherent fault tolerance by decoupling compute units. The architecture is powered by a high-performance, CPU-free peer-to-peer communication library that ensures minimal overhead and high throughput. Experiments show that EaaS achieves performance comparable to monolithic systems while providing robust fault tolerance and strong scalability. EaaS incurs less than a 2% throughput reduction under simulated hardware failures that would otherwise halt monolithic architectures. It further saves up to 37.5% of computing resources through dynamic, fine-grained adaptation to serving traffic, demonstrating strong resilience for large-scale MoE deployment in production.
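To make the idea of stateless, decoupled expert services concrete, the following is a toy sketch and not the EaaS implementation. It assumes that an expert's output depends only on its input, so any healthy replica can serve a request after another replica fails. The names `ExpertService`, `Router`, and `demo` are hypothetical, and the sketch does not model the CPU-free peer-to-peer communication library, GPU execution, or real gating.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ExpertService:
    """Hypothetical stateless expert: output depends only on the input."""
    expert_id: int
    fn: Callable[[float], float]
    healthy: bool = True

    def forward(self, x: float) -> float:
        # An unhealthy replica simulates a hardware failure.
        if not self.healthy:
            raise ConnectionError(f"replica of expert {self.expert_id} unreachable")
        return self.fn(x)


@dataclass
class Router:
    """Hypothetical client side: sends a request to any healthy replica."""
    replicas: Dict[int, List[ExpertService]] = field(default_factory=dict)

    def register(self, svc: ExpertService) -> None:
        self.replicas.setdefault(svc.expert_id, []).append(svc)

    def call(self, expert_id: int, x: float) -> float:
        # Because experts hold no per-request state, retrying on another
        # replica is safe and needs no recovery protocol.
        for svc in self.replicas.get(expert_id, []):
            try:
                return svc.forward(x)
            except ConnectionError:
                continue
        raise RuntimeError(f"no healthy replica for expert {expert_id}")


def demo():
    router = Router()
    primary = ExpertService(0, lambda x: 2.0 * x)
    backup = ExpertService(0, lambda x: 2.0 * x)
    router.register(primary)
    router.register(backup)

    before = router.call(0, 3.0)   # served by the primary replica
    primary.healthy = False        # simulate a failure
    after = router.call(0, 3.0)    # transparently served by the backup
    return before, after
```

In this sketch a failure costs only a retry on another replica, which is the property that statelessness buys. In a monolithic deployment, by contrast, the abstract notes that the same failure would halt serving.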

Type

Publication

arXiv Preprint